[Ch 5] RAG Integration — Giving Your Agent Real Knowledge

Turn the stub tools from Ch 4 into real implementations: embed project documentation with OpenAI embeddings, build a FAISS index, implement search_docs with vector similarity search, and implement generate_test_cases using retrieved context.

[Ch 4] Build Your First Production-Ready Agent

Build a complete, multi-turn AI agent from scratch using LangGraph, with persistent memory via a SQLite checkpointer, proper streaming output, structured tool schemas, and multi-conversation thread support.

[Ch 3] Getting Started with LangChain & LangGraph

A practical introduction to LangChain’s core building blocks and LangGraph’s stateful graph abstraction — including messages, @tool, StateGraph, nodes, edges, and a complete Hello World agent.

[Ch 1] Introduction to AI Agents — Why Do We Need Them?

What exactly is an AI agent, how does it differ from a chatbot or an LLM pipeline, and when should you actually use one? This chapter covers the agent loop, real use cases, and the honest reasons not to build an agent.

[Ch 2] AI Agent Components & Context Engineering

A deep dive into the four core components of an AI agent system, and why Context Engineering — managing everything in the LLM’s context window — matters far more than just writing good prompts.

Build Enterprise AI Agent from Scratch

Why I wrote this series, what you’ll build, and how to follow along — an overview of all nine chapters covering the full lifecycle of a production AI agent.

AI Speech Engineer Roadmap: From Zero to Production in 18 Months

A curated 18-month learning roadmap for becoming an AI Speech Engineer — covering foundations, core technologies (ASR, TTS, Speaker Verification, Diarization, Voice Conversion), and the latest Audio Language Models, distilled from 6 years of hands-on experience.

Why Do Language Models Hallucinate?

An analysis of why language models hallucinate — hallucinations arise from statistical pressures in training and evaluation procedures that reward guessing over acknowledging uncertainty.

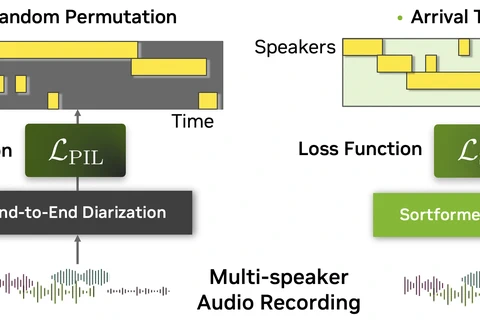

Speaker Diarization: From Traditional Methods to the Modern Models

Speaker diarization answers “Who spoke when?” This post covers core concepts, traditional and modern end-to-end approaches, and the latest Sortformer model for speaker segmentation.

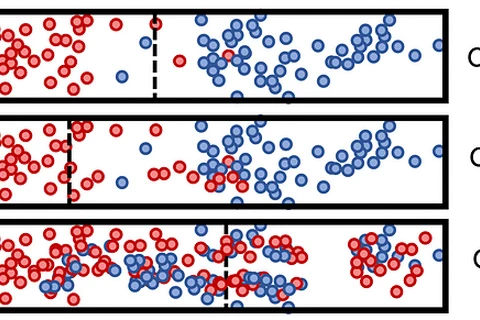

Why Entropy Matters in Machine Learning?

Understanding entropy and why it’s a core concept in decision trees, neural networks, and loss functions like cross-entropy.

![[Ch 5] RAG Integration — Giving Your Agent Real Knowledge](/blog/blog-tutorial/enterprise-ai-agent/ch5-rag-integration/featured_hu_5b040927edaed896.png)

![[Ch 4] Build Your First Production-Ready Agent](/blog/blog-tutorial/enterprise-ai-agent/ch4-build-first-agent/featured_hu_982d66a7e50edfe2.png)

![[Ch 3] Getting Started with LangChain & LangGraph](/blog/blog-tutorial/enterprise-ai-agent/ch3-langchain-langgraph-intro/featured_hu_187bd36a14a309fe.png)

![[Ch 1] Introduction to AI Agents — Why Do We Need Them?](/blog/blog-tutorial/enterprise-ai-agent/ch1-introduction/featured_hu_10dc178be3842dc.png)

![[Ch 2] AI Agent Components & Context Engineering](/blog/blog-tutorial/enterprise-ai-agent/ch2-components-context-engineering/featured_hu_fd84beb6a1471b06.png)